How ChatGPT Will Die

First you have to understand recursion, and to understand recursion, you must understand recursion. But I can help you understand it.

I read academic papers for entertainment. Actually, that’s not exactly true. I read them to get a glimpse of what the future might bring and perhaps how I can monetize it. I have come to realize that it’s not gravity but money that makes the world go round. I know that I am going to get comments like “money can’t buy everything”, but neither can no money, so they mathematically cancel each other out.

So my interest was piqued by the title of an academic paper. The words that caught my eye were “The Curse of Recursion”. As a coder, you learn about recursion in computer science. However, going to the dictionary is not that helpful. An online Oxford dictionary states that “recursion is the repeated application of a recursive procedure!” See, I wasn’t kidding when I said that you have to understand recursion to understand recursion. But I can make it all clear for you without resorting to computer code. Do you have any Christian friends who, when at a crossroads in life, ask themselves what Jesus would do? Some of them may wear bracelets printed or engraved with “WWJD”. Well, recursion is if Jesus asked himself “What would Jesus do?”. If you google “what is recursion in simple terms”, the answer is that recursion means “defining a problem in terms of itself”.

The second part of the title of the academic paper was more interesting. It proclaimed “Training on Generated Data Makes Models Forget”. It was filed under “Artificial Intelligence”. I immediately knew that they were talking about Large Language Models (LLMs) like ChatGPT and the wealth of content that they produce.

According to the latest available data, ChatGPT currently has over 100 million users. And the website currently generates 1.8 billion visitors per month. This user and traffic growth was achieved in a record-breaking three-month period (from February 2023 to April 2023). This LLM is used for everything from bypassing Google search to writing web content. A recent developer survey has shown that over 60% of non-native speaking web developers use ChatGPT to refine their web content. Even spammers are using it to improve their English in spam, phishing and scam emails.

This is where recursion comes in. If you consider web content as a widely varied pool, a human mosaic of thought, word and knowledge, then it is slowly being polluted by homogeneous AI-written content. Even I am guilty of using AI-written online descriptions of a marketing video player for websites that I coded. All of the content diversity generated by millions of minds, having millions of different motives, cultures, points of view, objectives, styles, abilities and means, has created a cosmopolitan human polyglot native stew of data, information and knowledge online. It is a fascinating process. But what happens if you start diluting this with homogenized pablum that has been through the milquetoast mixmaster of a Large Language Model? In a lot of cases it will improve grammar usage, but the long-term effect is more insidious. What will happen to GPT-{n} once LLMs contribute much of the language found online? All LLMs are trained on massive scrapings of online data. Some suggest having a direct data stream feeding LLMs, but the data stream will be polluted with recursively generated verbiage. That is the curse of recursion. It’s like mixing a cocktail and using that cocktail as a base to mix another cocktail and so on, until you have no alcohol and all mixer.
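For the coders in the audience, here is a toy sketch of that cocktail-of-a-cocktail loop. It is not the method from the paper, just an illustration under loud assumptions: the "web" is a made-up list of 100 phrases, and "train a model and let it write the next web" is replaced by plain sampling with replacement from the previous generation. The names `next_generation` and `simulate` are my own inventions.

```python
import random
from collections import Counter


def next_generation(corpus, rng):
    """Build a new corpus by sampling, with replacement, from the previous one.

    Assumption: this stands in for "train a model on the corpus, then let
    it write the next corpus". A phrase that is never drawn is gone for good.
    """
    return [rng.choice(corpus) for _ in range(len(corpus))]


def simulate(generations=30, seed=0):
    """Return the count of distinct phrases per generation and the final mix."""
    rng = random.Random(seed)
    # Hypothetical starting web: two common phrases and thirty rare ones.
    corpus = ["common_a"] * 40 + ["common_b"] * 30 + [f"rare_{i}" for i in range(30)]
    distinct = [len(set(corpus))]
    for _ in range(generations):
        corpus = next_generation(corpus, rng)
        distinct.append(len(set(corpus)))
    return distinct, Counter(corpus)


if __name__ == "__main__":
    distinct, final_counts = simulate()
    print("distinct phrases per generation:", distinct)
    print("most common phrases at the end:", final_counts.most_common(3))
```

The count of distinct phrases can only stay flat or fall from one generation to the next, because each new corpus is drawn entirely from the old one. The rare phrases tend to be the first to disappear, which is the no-alcohol-all-mixer effect in miniature.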

Some researchers from the University of Oxford, University of Cambridge, Imperial College London, University of Toronto & Vector Institute and the University of Edinburgh decided to find out what happens when you have a mix of recursive inputs into LLMs.

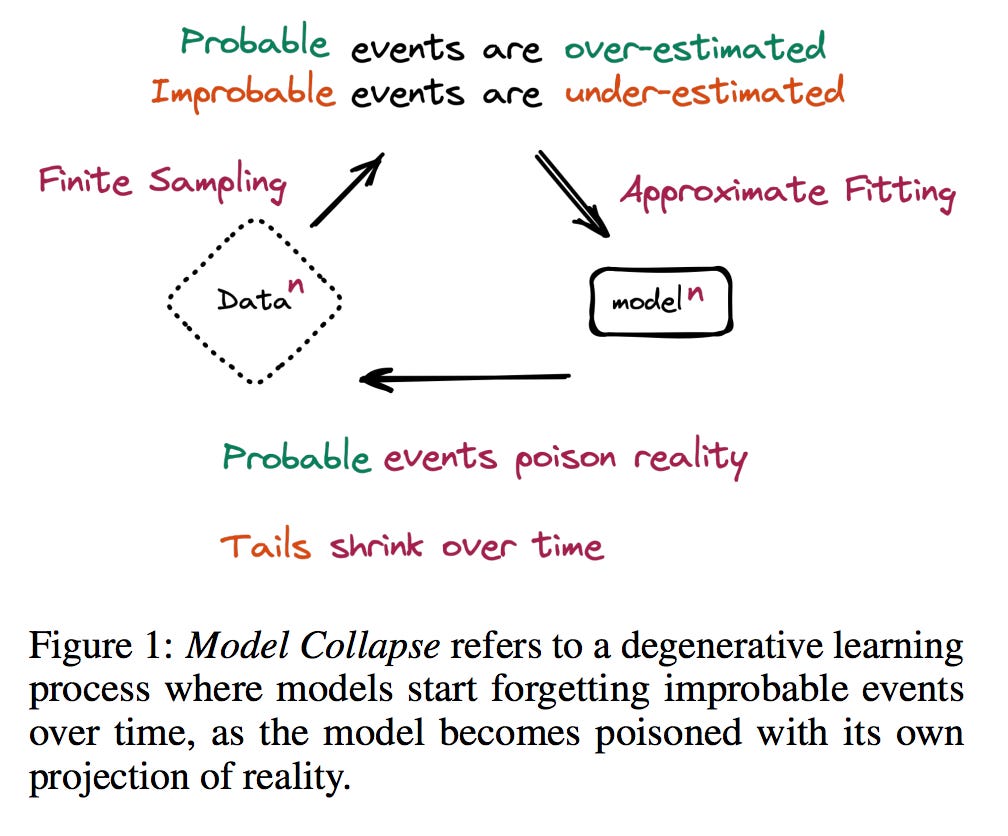

As quantum physics has taught us, everything in life has a probability distribution. At any given moment, there is a probability that I will be murdered, win the lottery, get raped, write a Substack post, attempt to learn a new language or eat a ham sandwich for lunch (with Oka cheese, tomato, mayo, arugula, onion on a toasted brioche bun). All of these probabilities are in the probability distribution of my life. The more improbable events are in the tails of the probability distribution curve. LLMs have a probability distribution of what they output. A parameter called “temperature” controls how adventurous they are when they respond to prompts. A low temperature means that they stick strictly to the most probable words, but they are very mechanical and boring. Raise the temperature and the LLM starts considering lower-probability things. If the temperature gets high enough, it is more likely to start making things up, like the time it said that porcelain shards were a good additive to breast milk because of the calcium content of the shards. With the ingestion of recursively self-generated content, the first symptom is that probability tails shrink over time as per the diagram below:

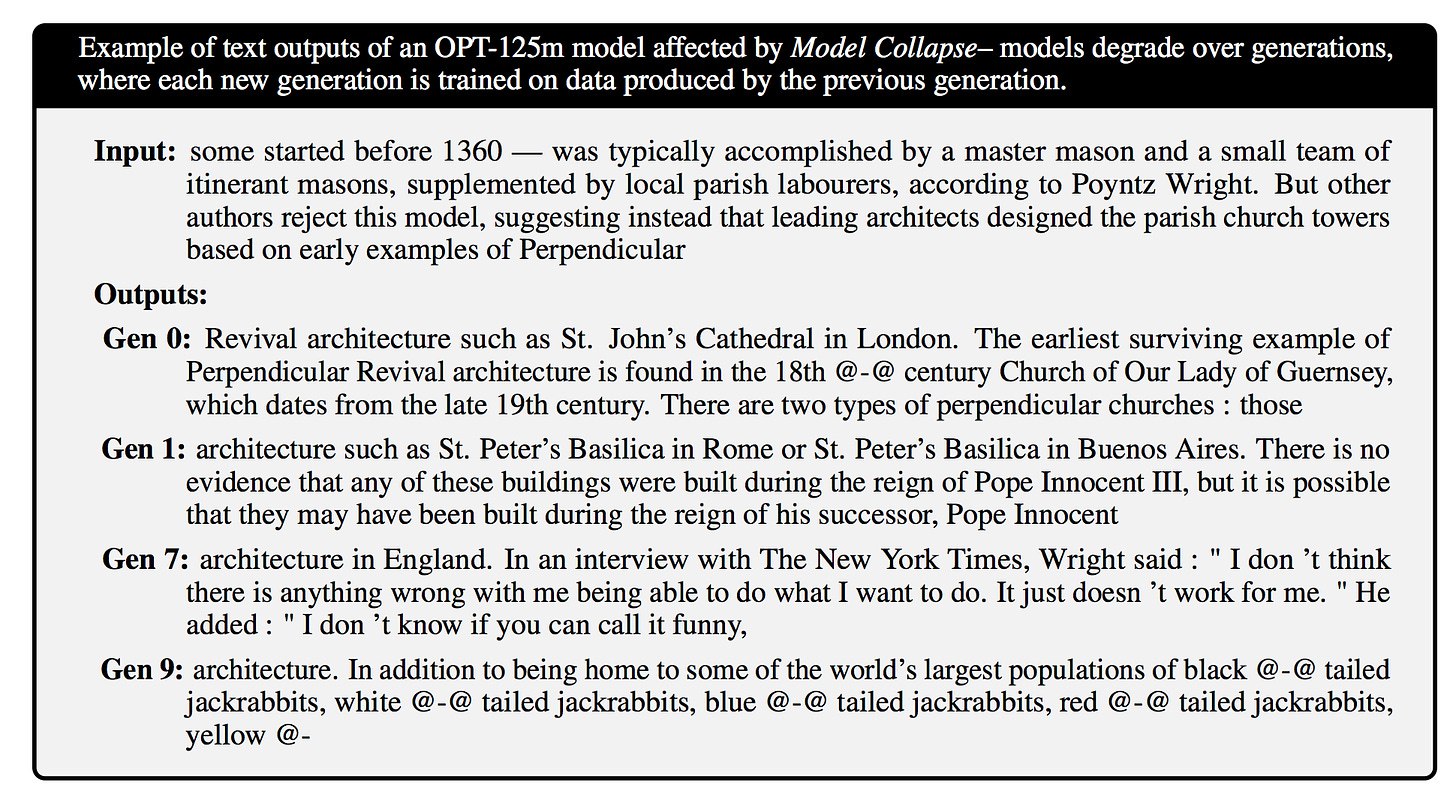
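If you want to poke at the temperature knob yourself, here is a minimal sketch of how temperature reshapes an output distribution. To be clear, this is about the sampling setting, not about the recursive-training effect in the diagram. It uses the standard softmax-with-temperature formula; the four scores in `logits` are hypothetical numbers I made up, and `tail_mass` is my own helper name, not anything from the paper or from ChatGPT.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, scaled by a temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for four candidate next words, most likely first.
logits = [4.0, 2.0, 1.0, 0.0]


def tail_mass(temperature):
    """Probability of picking anything other than the top candidate."""
    probs = softmax_with_temperature(logits, temperature)
    return 1.0 - probs[0]


if __name__ == "__main__":
    for t in (0.5, 1.0, 2.0):
        print(f"temperature={t}: tail mass = {tail_mass(t):.3f}")
```

A lower temperature squeezes probability out of the tail and onto the top candidate, while a higher temperature spreads it back out toward the unlikely options.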

From there, it gets worse. As the LLM continues to feed on its own sputum, catastrophic forgetting takes place and intense hallucinations start to become commonplace. It’s like inbreeding in biological systems until freaks are born. Take a look at this generational progress of unlearning from the academic paper:

I would definitely like to see a red-assed-tailed jackrabbit just for the comedic interlude, but I wouldn’t trust the LLM to write something serious for me.

And then it struck me. This is how ChatGPT is going to die. Total model collapse. Just like the Hapsburg dynasty in Europe, who inbred themselves into oblivion, LLMs will go the same way.

So is there hope? You bet your sweet bippy. LLMs will be deposed by Small Language Models and Few Shot Learners. Busting the myth that German engineers are only good at hardware, researchers at the Center for Information and Language Processing, LMU Munich, Germany have come up with a Small Language Model, Few Shot Learner that outperforms GPT-3 although it is much “greener” in that it has three orders of magnitude fewer parameters.

So here is the massive opportunity, both in technology and in investing. It will be in creating multiple specific AI machines that are expert in their domains with monitored zero knowledge drift. I can see a marketplace for these, where an enterprising group of entrepreneurs start creating AI experts and selling them, or their services in an online marketplace. And the websites will be written by AI as well.

Here’s the link to the academic paper on model collapse: https://arxiv.org/pdf/2305.17493v2.pdf

The thing that excites me the most about where this is all going is the plethora of previously unimagined business opportunities that this will open up in the very near future. The key word is unimagined. I recently scraped a small newspaper from the 1950s for references to my grandparents in the social columns. In among the content filler blurbs at the end of articles, there was a conjecture that one day, mail would be delivered by rockets. It couldn’t be imagined that mail would be delivered over a wire or even over the air. The future in the next few years is just as unimaginable.

Thanks for reading and subscribe if you would like more insights gleaned from my web scrapers and Natural Language Processing tools.

Happy Father’s Day to those appropriate individuals.

The takeaway here, in layman’s terms, is:

Real men take their Whiskey/Whisky straight. Mixing mixer into the libation just dilutes its usefulness.